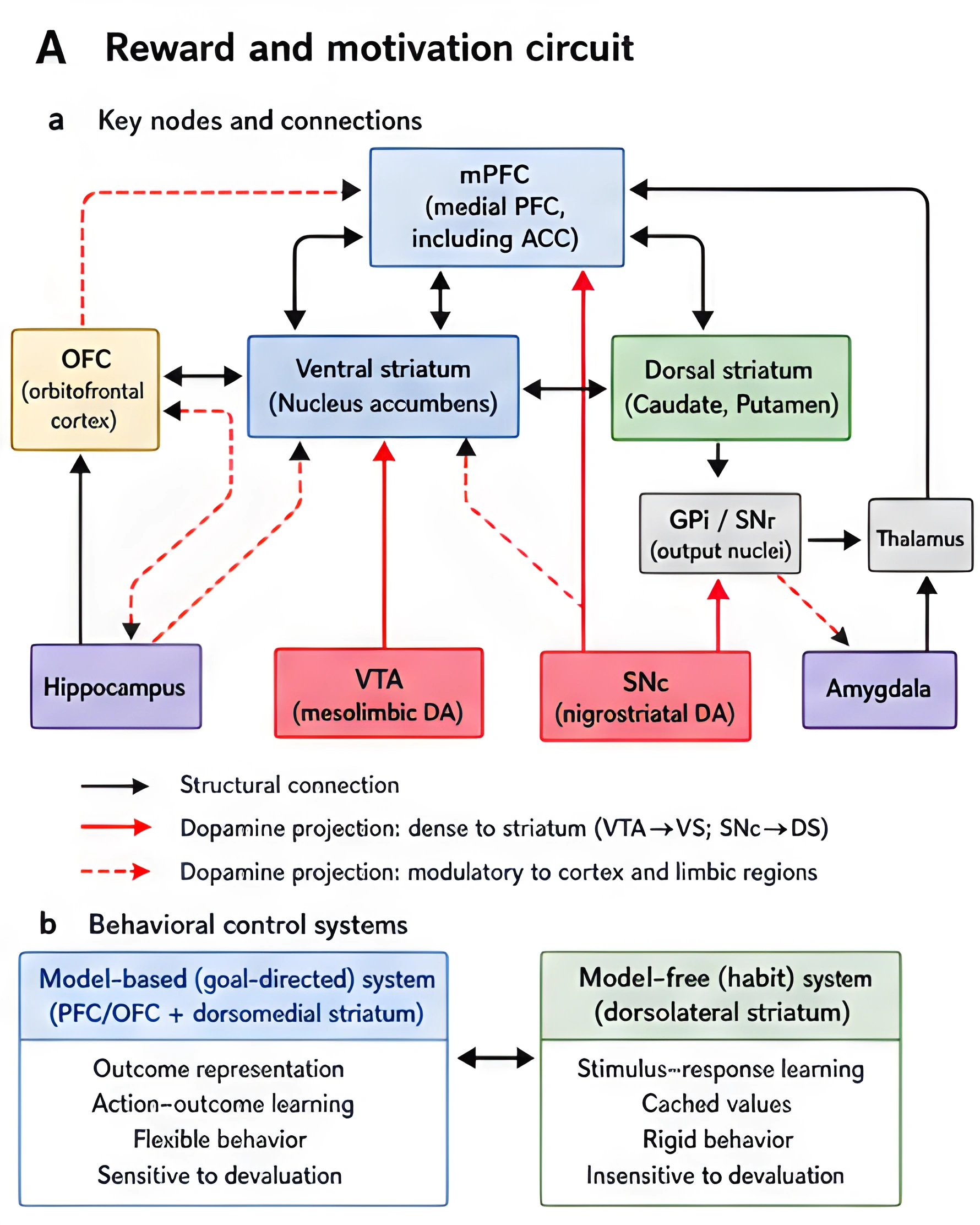

The dopamine reward pathway is not one tiny pleasure switch. It is a connected reward circuit centered on midbrain dopamine neurons and their projections into the ventral striatum, nucleus accumbens, prefrontal cortex, and related limbic regions [3], [6], [11]-[13].

Its job is not to manufacture enjoyment in isolation. The reward pathway helps the brain assign salience, update expectations, and reinforce behavior, which is why it sits at the center of learning, motivation, and habit formation [1]-[6].

That is also why it matters in addiction. The same circuitry that helps us learn from food, sex, novelty, achievement, and social reward can be trained by substances and high-intensity behaviors. Over time, the dopamine reward system can become biased toward cue-driven wanting, while ordinary rewards feel weaker by comparison [2], [4], [5], [7]-[10].

When people say "reward center of the brain," they are usually pointing toward a network that includes the ventral tegmental area, nucleus accumbens, ventral pallidum, prefrontal cortex, amygdala, hippocampus, and related striatal and limbic structures [3], [6], [11], [12].

The ventral tegmental area, or VTA, is one of the best-known sources of dopamine neurons in the midbrain. Those neurons project heavily to the nucleus accumbens and other parts of the ventral striatum, where dopamine helps shape reward learning and motivational salience [3], [6], [11], [13].

But the pathway is not one-way. It is a loop. Cortical regions send top-down signals that influence what the reward system notices, while limbic regions contribute context, memory, and emotional meaning. In practice, that means the dopaminergic system is not simply registering pleasure. It is integrating all of those inputs.

That architecture is why the same pathway supports both healthy learning and compulsive repetition [2]-[6].

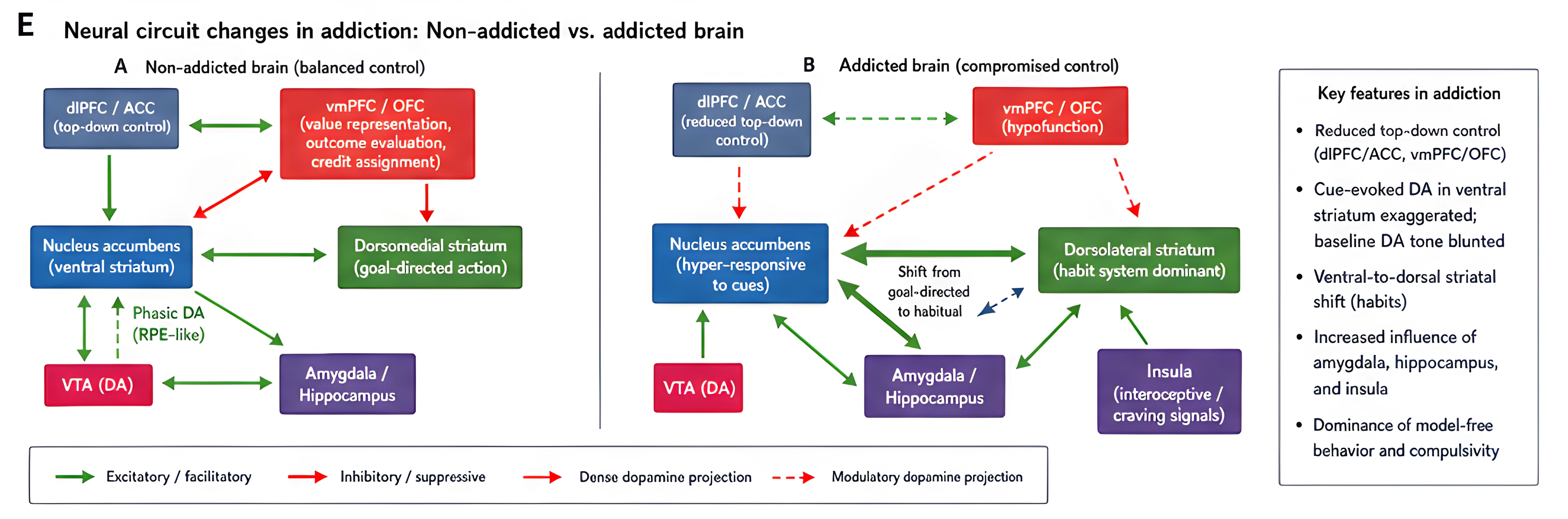

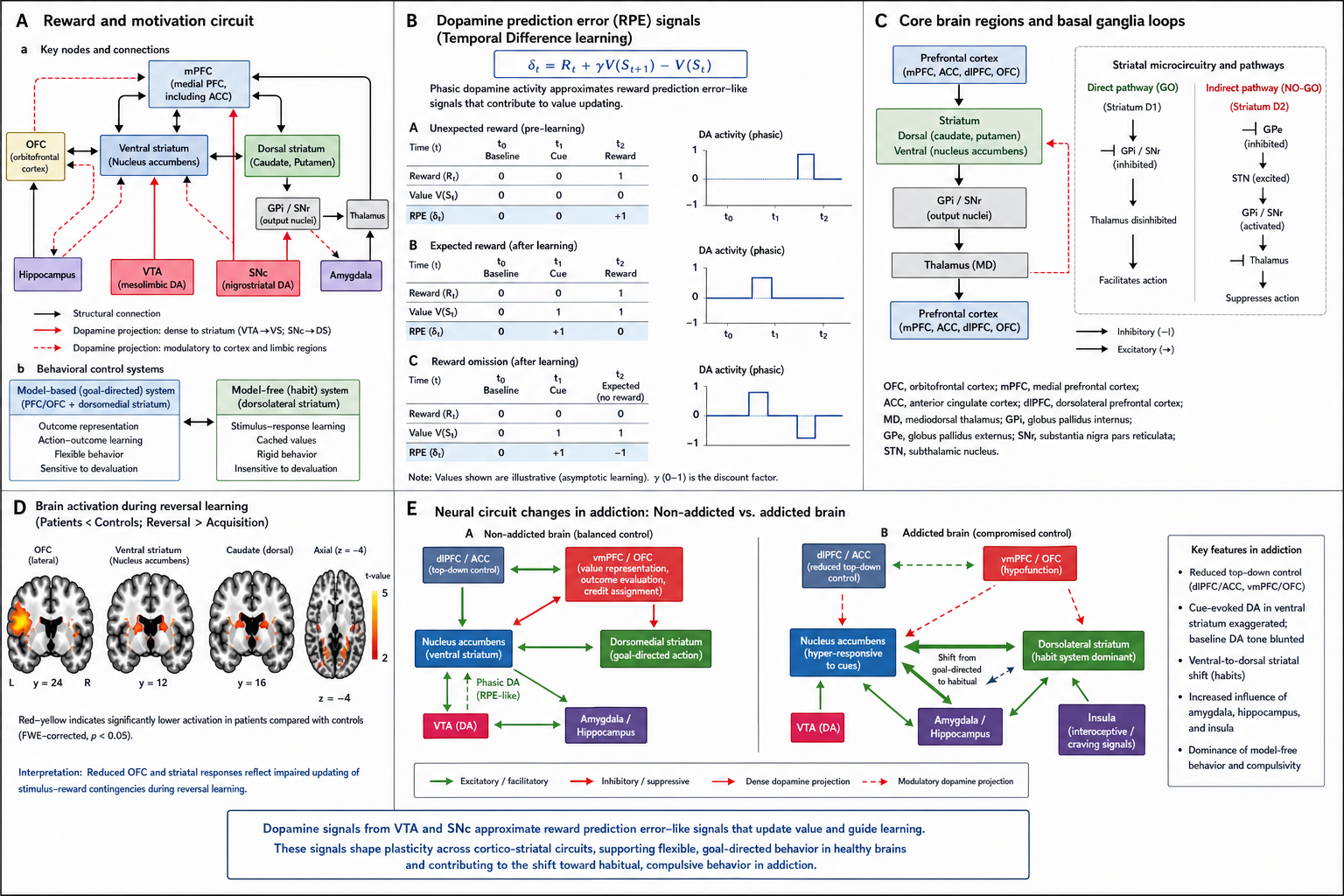

This first figure maps the core nodes of the dopamine reward pathway. It shows the VTA, striatum, prefrontal cortex, and related loops so the rest of the article has a concrete circuit to refer back to.

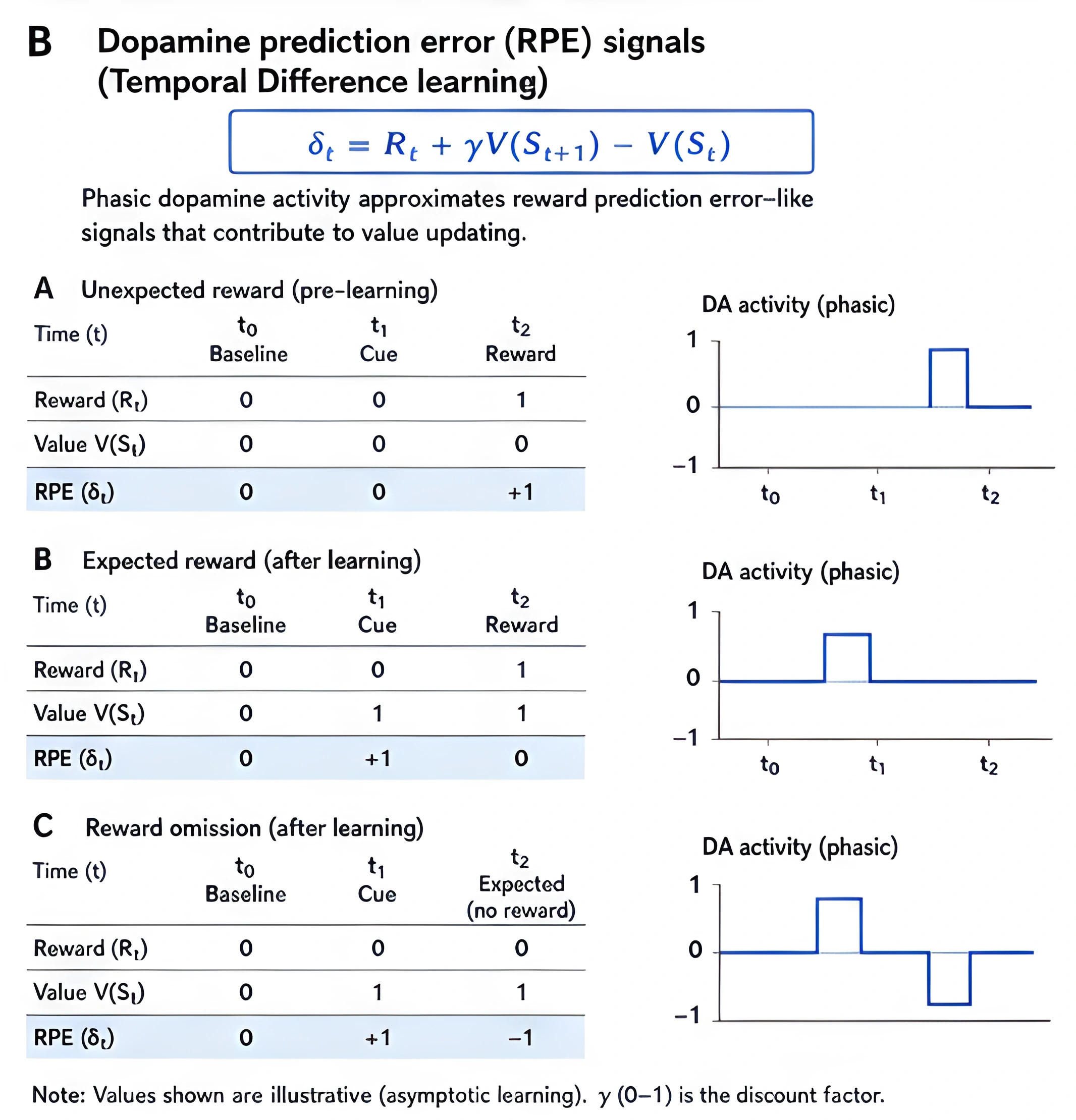

The old shorthand says dopamine equals pleasure. The better version is that dopamine participates in learning what is important and what is worth pursuing [1]-[3], [11].

Taber and colleagues separate reward into liking, wanting, and learning. That distinction is useful because dopamine does not map neatly onto only one of those terms. It contributes strongly to wanting and learning, while hedonic pleasure involves a broader network of opioid, glutamatergic, and other circuits too [3].

Keiflin and Janak's review is especially helpful here. They describe dopamine as a reward prediction error system: dopamine responds when outcomes are better or worse than expected, and those signals help update future behavior [2]. In other words, dopamine helps the brain ask whether an outcome was better or worse than expected, and what to do differently next time.

The tighter scientific version of that idea is reward prediction error. That makes dopamine less like a pleasure meter and more like a teaching signal.
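That teaching-signal idea can be made concrete with a small sketch. It assumes a Rescorla-Wagner style update, a textbook learning rule rather than anything specified in the reviews cited here. The function names, the learning rate of 0.2, and the reward size of 1.0 are illustrative choices.

```python
def prediction_error(received, expected):
    """Positive when the outcome beats the expectation, negative when it falls short."""
    return received - expected


def update_expectation(expected, received, learning_rate=0.2):
    """Move the expectation a fraction of the way toward what actually happened."""
    return expected + learning_rate * prediction_error(received, expected)


# Hypothetical scenario: the same reward (size 1.0) is delivered 50 times in a row.
expectation = 0.0
errors = []
for _ in range(50):
    errors.append(prediction_error(1.0, expectation))
    expectation = update_expectation(expectation, 1.0)

# After learning, the expected reward is withheld once.
omission_error = prediction_error(0.0, expectation)
```

The first entry in `errors` is large because the reward is a surprise, later entries shrink toward zero as the reward becomes expected, and `omission_error` is negative because an expected reward failed to arrive.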

This second figure shows the reward prediction error idea in a simple before-and-after form. It makes the learning point visible: dopamine shifts from the reward itself to the cue once the brain has learned the pattern.
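The cue shift can be sketched with a temporal-difference (TD) learner, a standard computational account of reward prediction error. This is a toy model under stated assumptions: `simulate_td`, the five-step trial, and every parameter value are hypothetical, and the cue is assumed to arrive unpredictably from a baseline whose value is zero.

```python
def simulate_td(n_trials=200, n_steps=5, alpha=0.2, gamma=1.0, reward=1.0):
    """Return (error_at_cue, error_at_reward) for each trial of a toy TD(0) learner."""
    # One learned value per within-trial time step; step 0 is cue onset.
    values = [0.0] * n_steps
    history = []
    for _ in range(n_trials):
        # The cue appears without warning, so the pre-cue baseline value is 0.
        cue_error = gamma * values[0] - 0.0
        reward_error = 0.0
        for t in range(n_steps):
            is_last = t == n_steps - 1
            r = reward if is_last else 0.0            # reward arrives at the final step
            next_value = 0.0 if is_last else values[t + 1]
            delta = r + gamma * next_value - values[t]  # TD prediction error
            values[t] += alpha * delta
            if is_last:
                reward_error = delta
        history.append((cue_error, reward_error))
    return history


history = simulate_td()
first_trial = history[0]
last_trial = history[-1]
```

On the first trial the error sits entirely at the reward. By the last trial the error at reward delivery is close to zero and the error at cue onset is close to the full reward value, which is the before-and-after pattern described above.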

Di Chiara and Bassareo make a similar point from a different angle. Their review emphasizes that dopamine matters for incentive learning and cue-controlled motivation, not just for raw pleasure [1]. That is important because addictive behavior usually becomes most powerful when cues start to carry more weight than the actual reward.

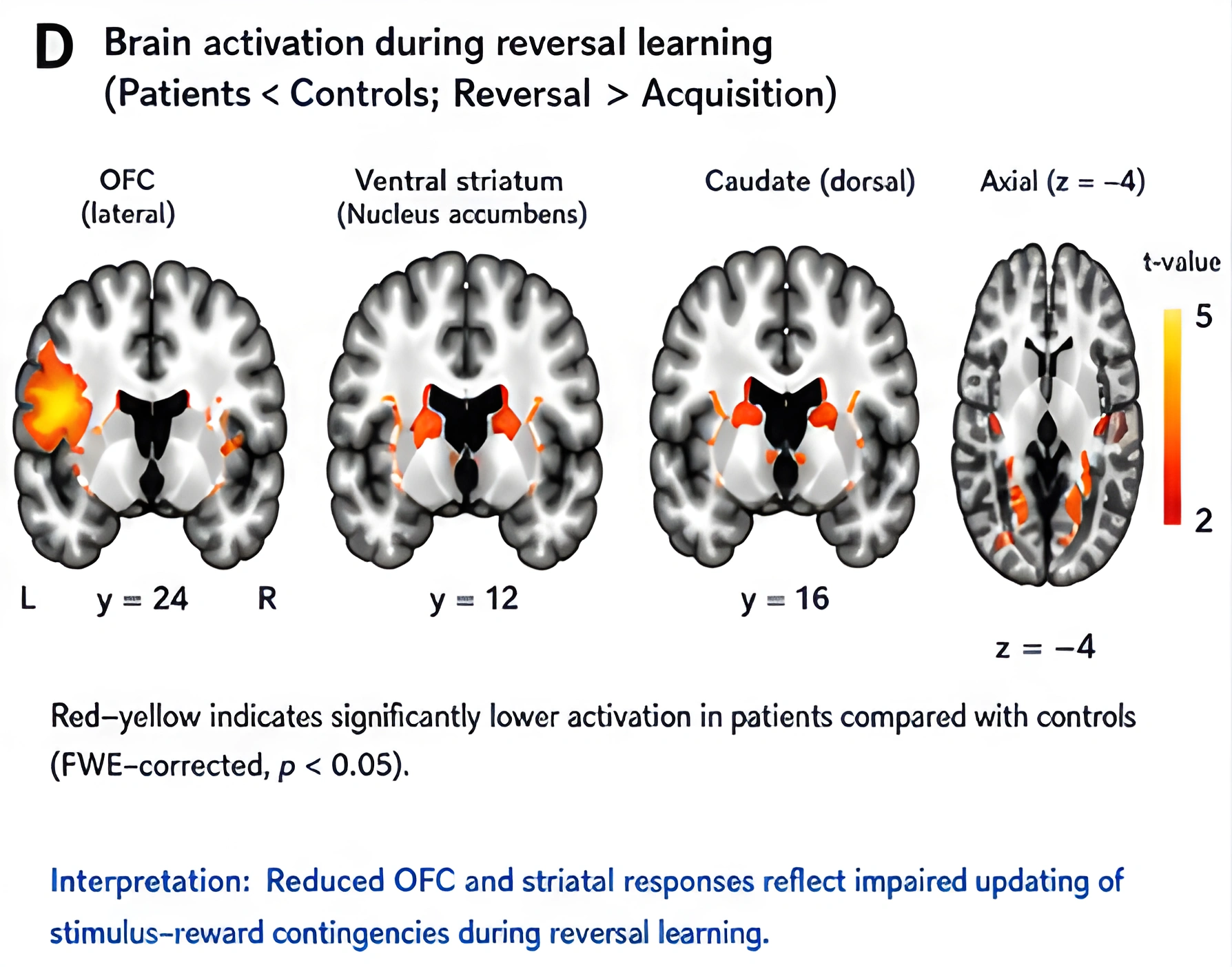

Addictive substances and high-reward behaviors can exploit the same learning machinery that guides ordinary reward. Volkow and colleagues show that drugs can produce fast dopamine changes that reinforce behavior, but addiction is not simply a matter of "too much dopamine" [4]. In many addicted states, direct reward responses are blunted, while cue-triggered responses remain strong or become stronger [4], [5], [7].

That is one reason people can keep seeking a behavior even when it no longer feels especially rewarding. The cue has become the trigger.

The literature on cue-reactivity makes this very concrete. Starcke and colleagues show that behavioral addictions such as gambling, gaming, and buying are associated with stronger responses to addiction-relevant cues across subjective, physiological, and neural measures [7]. Similar cue-driven dynamics appear in drug, gambling, food, and sexual reward studies, which suggests that the brain can learn to assign strong motivational force to predictive signals themselves [7]-[9].

This third figure summarizes cue-reactivity across reward types. It reinforces the point that triggers, not just outcomes, can drive the reward system once the circuit has learned the association.

That is the practical meaning of "dopamine reward pathway" in addiction:

That loop can become so strong that the person experiences compulsion more than pleasure.

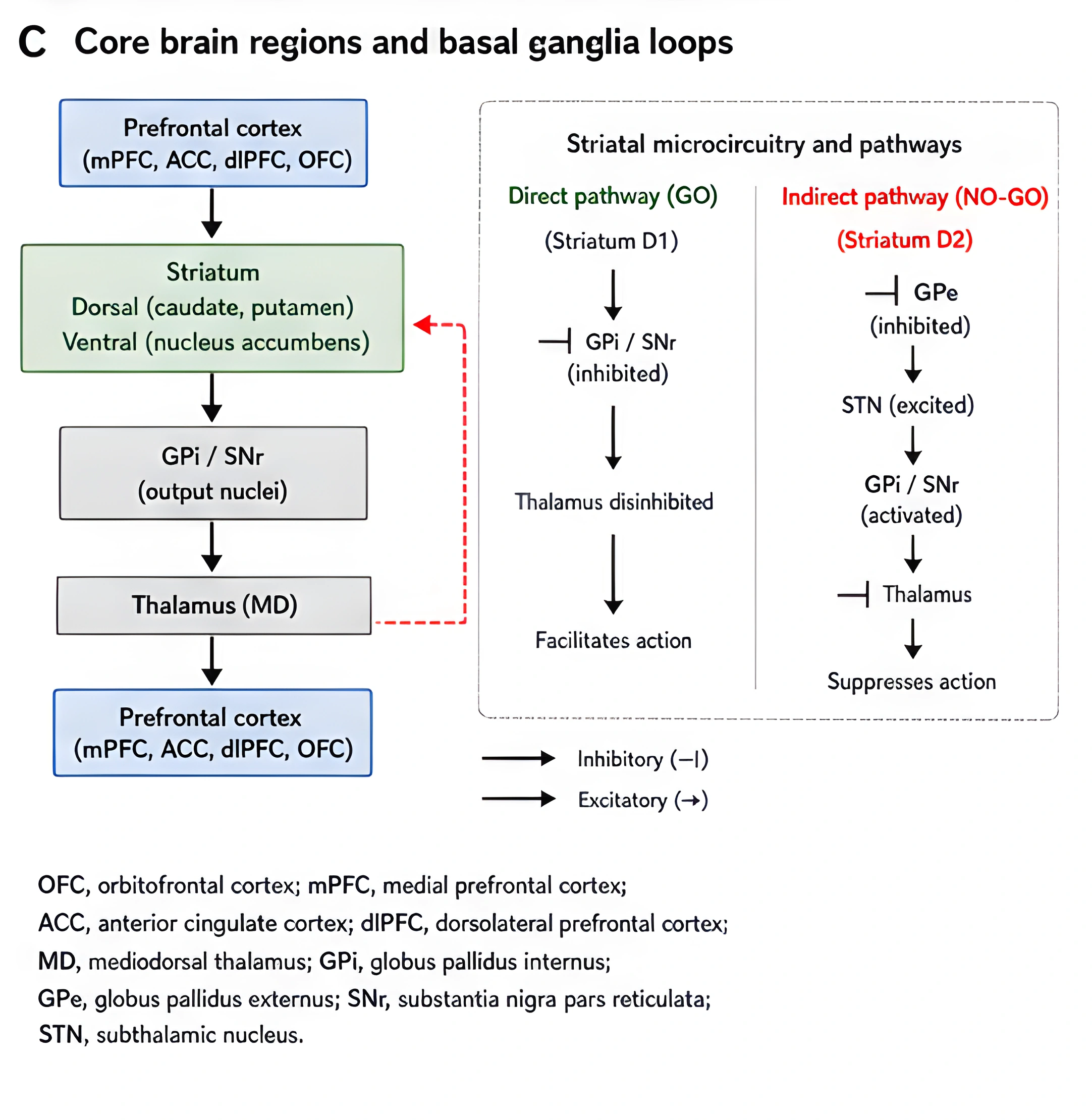

One of the most common mistakes in dopamine writing is to treat dopamine as if it acts alone. It does not.

Reward circuitry in addiction is distributed across a network. Cooper, Robison, and Mazei-Robison describe the VTA-to-nucleus-accumbens pathway as only one node in a broader system that includes glutamatergic input from prefrontal, amygdala, hippocampal, and thalamic regions [6]. Their review is useful because it keeps the article from oversimplifying addiction into a single neurotransmitter story.

Volkow and colleagues go further and argue that addiction is better understood as a circuit imbalance involving reward, conditioning, motivation, and executive control [4]. That helps explain why cue-driven seeking can stay strong even when direct drug reward weakens.

The key implication is that the brain does not "decide" in one place. It computes salience, memory, emotion, and control across linked circuits. The reward pathway is central, but it is central inside a network.

Repeated exposure does not just make a reward familiar. It changes what the brain learns to prioritize.

Koob and Le Moal's allostasis model explains addiction as a chronic reward dysregulation process in which positive reinforcement gives way to stress activation, reward deficit, and a shifted set point [10]. Koob and Volkow's later neurocircuitry model organizes that progression into binge/intoxication, withdrawal/negative affect, and preoccupation/anticipation [11].

Together, those models help explain why the reward pathway can become overtrained:

That shift is not just psychological. It is circuit-level plasticity.

Keiflin and Janak's work on prediction error helps explain the learning side [2]. Cooper and colleagues help explain the microcircuit side [6]. Volkow and colleagues help explain the broader systems side [4]. Koob and Volkow help explain the stage progression over time [11].

The result is a reward system that keeps predicting and seeking the same thing, even when the person is trying to stop.

One of the most important ideas in addiction science is that reward-seeking can move from flexible goal-directed behavior toward more automatic habit-like responding.

The ventral-to-dorsal shift described in the prediction-error literature is useful here [2]. Early behavior is more likely to be driven by outcome value and immediate reward. Later behavior can become more cue-driven and more habitual, with stronger involvement from dorsal striatal systems [2], [4], [8], [11]-[13].

That shift helps explain a common recovery experience:

That is not a failure of willpower alone. It is a learned circuitry problem.

The habit-formation literature points in the same direction [14]. Repetition makes behavior easier to initiate, less dependent on reflection, and more tightly bound to context. Once that happens, the cue itself can feel like a command.

This fourth figure shows the ventral-to-dorsal shift that makes repeated reward seeking look more automatic over time. It helps explain why the behavior can become harder to interrupt even after the reward has lost value.
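One way to picture why a habit survives after the reward has lost value is a toy mixture of two controllers. This is purely an illustrative assumption, not a model taken from the cited reviews: `response_drive`, the `habit_rate` of 0.05, and the exponential growth of habit strength are all hypothetical.

```python
def response_drive(n_repetitions, outcome_value, habit_rate=0.05):
    """Toy mixture of goal-directed and habitual control (illustrative only)."""
    # Habit strength grows toward 1 with repetition and ignores the current outcome value.
    habit_strength = 1.0 - (1.0 - habit_rate) ** n_repetitions
    # Goal-directed control tracks the current outcome value and fades as habit takes over.
    goal_directed = (1.0 - habit_strength) * outcome_value
    return goal_directed + habit_strength


# Early in learning (5 repetitions) versus late (100 repetitions),
# after the outcome has been devalued to 0.
early_devalued = response_drive(5, outcome_value=0.0)
late_devalued = response_drive(100, outcome_value=0.0)
```

In this sketch, devaluing the outcome removes most of the drive early in learning, while after many repetitions the drive stays high because it no longer depends on what the outcome is worth.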

If the reward pathway has been overtrained, recovery should not be framed as "clearing dopamine." That is the wrong target.

The better target is retraining the reward system:

This is where the allostasis and circuit models converge. Koob and Le Moal emphasize the role of stress and reward dysregulation [10]. Koob and Volkow emphasize preoccupation and negative affect [11]. Volkow and colleagues emphasize weaker frontal control and stronger cue-driven motivation [4]. Those are different ways of describing the same practical truth: recovery is easier when the brain is no longer being asked to fight the old learning pattern alone.

That is also why recovery can feel flat at first. The old reward pathway may still be highly reactive, while ordinary life has not yet regained enough salience. The person is not broken. The reward landscape has changed.

This fifth figure illustrates the broader network of reward dysregulation, pulling together the concepts of allostasis, stress, and shifted baseline during the addiction cycle.

This final composite brings all five figures together. It serves as a visual summary of the pathway: the core nodes, the prediction-error shift, the cue-reactivity, the transition to habit, and the broader dysregulation.

For a broader explanation of the compulsive loop, see Dopamine Addiction: What People Mean, What the Brain Is Actually Doing, and How Recovery Works.

If the phrase "dopamine reward pathway" still feels abstract, use this version:

The brain notices something important, predicts what will happen next, and remembers what worked.

That is the reward pathway at a functional level.

When the pathway is healthy, it helps you learn from natural rewards and move on. When it is overtrained, it can keep pulling attention toward the same cue, the same urge, and the same behavior even when the payoff is no longer worth it [1]-[5], [7]-[10], [13].

That is why the pathway matters so much for addiction and recovery. It is not just a pleasure circuit. It is a learning circuit, a motivation circuit, and, when things go wrong, a compulsion circuit.

The dopamine reward pathway is the brain's reward system, or reward circuit: the network that helps you learn what is rewarding, what to approach, and what to repeat. When people ask about the reward center, reward circuit, or reward circuitry of the brain, they are usually pointing to this broader network rather than one isolated spot [3], [6], [11].

The ventral tegmental area (VTA), nucleus accumbens (NAc), ventral pallidum, prefrontal cortex, amygdala, hippocampus, and related striatal circuits are the main players. If your question is where dopamine is produced in the brain, the short answer is that dopamine neurons, also called dopaminergic neurons, are concentrated in midbrain regions such as the VTA and substantia nigra. As for how dopamine is made, it is synthesized from tyrosine via L-DOPA and then released along dopamine pathways, or dopaminergic pathways, into the broader reward circuit [3], [11]-[13].

The best-known reward route is the mesolimbic dopamine pathway, also called the mesolimbic system, mesolimbic dopamine system, mesolimbic reward pathway, mesolimbic dopaminergic pathway, dopaminergic mesolimbic pathway, mesolimbic dopaminergic system, or mesolimbic DA pathway. It runs from the VTA to the nucleus accumbens. When you include prefrontal projections, people often use terms like mesocorticolimbic pathway or mesolimbocortical pathway. Together, these form the core dopamine reward system, dopaminergic reward system, and brain reward pathway discussed in addiction science [3], [11], [13].

Dopamine is better described as a neuromodulator than a simple on-or-off signal. Its effect depends on receptor type, target cell, and circuit context. That is why questions framed as dopamine mechanism, dopamine mechanism of action, or even dopamine MOA need a circuit answer rather than a one-word label [1], [4], [6].

Dopamine is tied to reward because reward mechanisms include liking, wanting, learning, prediction, and motivation. Dopamine is one of the key neurotransmitters in that process, and its role in motivation is a big part of the story. If you are reading more broadly about neurotransmitters and dopamine, the main point is that the full dopaminergic system works in concert with broader reward circuitry rather than acting alone [1]-[3], [6].

In chemistry terms, dopamine is a catecholamine neurotransmitter. If your search is closer to dopamine structure, dopamine chemical structure, dopamine class, or dopamine classification, that is a valid background question, but it does less explanatory work than understanding where dopamine acts in the reward pathway [3].

Repeated learning makes cues more powerful. Over time, the cue can trigger wanting before the reward appears, which is why cravings can feel automatic and overpowering even if the actual behavior is no longer very enjoyable. This is also why recovery is better framed as changing the reward circuit and dopamine sensitivity than simply asking how to decrease dopamine levels [2], [4], [5], [10]-[14].

[1] G. Di Chiara and V. Bassareo, "Reward system and addiction: what dopamine does and does not do," Current Opinion in Pharmacology, vol. 7, no. 1, pp. 69-76, 2007, doi: 10.1016/j.coph.2006.11.003.

Essential review explaining that dopamine is about incentive learning and motivation, not just simple pleasure.

[2] R. Keiflin and P. H. Janak, "Dopamine prediction errors in reward learning and addiction: From theory to neural circuitry," Neuron, vol. 88, no. 2, pp. 247-263, 2015, doi: 10.1016/j.neuron.2015.08.037.

Foundational paper on reward prediction error and how dopamine signals shift from reward to predictive cues.

[3] K. H. Taber, D. N. Black, L. J. Porrino, and R. A. Hurley, "Neuroanatomy of dopamine: Reward and addiction," The Journal of Neuropsychiatry and Clinical Neurosciences, vol. 24, no. 1, pp. 1-4, 2012, doi: 10.1176/appi.neuropsych.24.1.1.

Clear overview of the anatomical pathways, separating liking, wanting, and learning.

[4] N. D. Volkow, G.-J. Wang, J. S. Fowler, D. Tomasi, and F. Telang, "Addiction: Beyond dopamine reward circuitry," PNAS, vol. 108, no. 37, pp. 15037-15042, 2011, doi: 10.1073/pnas.1010654108.

Explains addiction as an imbalance involving reward, memory, and executive control circuits, not just a dopamine overflow.

[5] N. D. Volkow, J. S. Fowler, G.-J. Wang, R. Baler, and F. Telang, "Imaging dopamine's role in drug abuse and addiction," Neuropharmacology, vol. 56, suppl. 1, pp. 3-8, 2009, doi: 10.1016/j.neuropharm.2008.05.022.

Connects dopamine signaling to the intense motivational salience of cues in addiction.

[6] S. Cooper, A. J. Robison, and M. S. Mazei-Robison, "Reward circuitry in addiction," Neurotherapeutics, vol. 14, no. 3, pp. 687-697, 2017, doi: 10.1007/s13311-017-0525-z.

Detailed look at the VTA-NAc pathway as a node in a much broader glutamatergic network.

[7] K. Starcke, S. Antons, P. Trotzke, and M. Brand, "Cue-reactivity in behavioral addictions: A meta-analysis and methodological considerations," Journal of Behavioral Addictions, vol. 7, no. 2, pp. 227-238, 2018, doi: 10.1556/2006.7.2018.39.

Strong support for the role of cue-reactivity in non-substance addictions like gambling and gaming.

[8] H. R. Noori, A. C. Linan, and R. Spanagel, "Largely overlapping neuronal substrates of reactivity to drug, gambling, food and sexual cues: A comprehensive meta-analysis," European Neuropsychopharmacology, vol. 26, no. 9, pp. 1419-1430, 2016, doi: 10.1016/j.euroneuro.2016.06.013.

Shows that the brain treats various addiction cues similarly at the circuit level.

[9] D. J. Kuss and M. D. Griffiths, "Internet and Gaming Addiction: A Systematic Literature Review of Neuroimaging Studies," Brain Sciences, vol. 2, no. 3, pp. 347-374, 2012, doi: 10.3390/brainsci2030347.

Confirms reward pathway involvement in modern digital and screen compulsions.

[10] G. F. Koob and M. Le Moal, "Drug addiction, dysregulation of reward, and allostasis," Neuropsychopharmacology, vol. 24, no. 2, pp. 97-129, 2001, doi: 10.1016/S0893-133X(00)00195-0.

Classic allostasis model explaining how repeated reward seeking leads to stress activation and a shifted baseline.

[11] G. F. Koob and N. D. Volkow, "Neurobiology of addiction: A neurocircuitry analysis," Lancet Psychiatry, vol. 3, no. 8, pp. 760-773, 2016, doi: 10.1016/S2215-0366(16)00104-8.

Expands the allostasis model into distinct stages: binge/intoxication, withdrawal/negative affect, and preoccupation/anticipation.

[12] B. J. Everitt and T. W. Robbins, "Neural systems of reinforcement for drug addiction: From actions to habits to compulsion," Nature Neuroscience, vol. 8, no. 11, pp. 1481-1489, 2005, doi: 10.1038/nn1579.

Important review showing how control shifts from flexible, goal-directed behavior to rigid, habit-based compulsion.

[13] N. D. Volkow, R. A. Wise, and R. Baler, "The dopamine motive system: Implications for drug and food addiction," Nature Reviews Neuroscience, vol. 18, no. 12, pp. 741-752, 2017, doi: 10.1038/nrn.2017.130.

A broad look at how dopamine drives motivation across multiple survival and reward categories.

[14] D. O'Tousa and N. Grahame, "Habit formation: Implications for alcoholism research," Alcohol, vol. 48, no. 4, pp. 327-335, 2014, doi: 10.1016/j.alcohol.2014.02.004.

Direct explanation of how repeated reinforcement turns actions into automatic, cue-triggered habits.
